Scratching the AI itch: an optimist's reaction to a new digital reality

and how Aristotle, skepticism, and faith in human creativity might unlock our greatest capacities

The AI boom feels a lot like when TikTok came out.

Everyone is talking about it—

Do you use it?

Do you like it?

I heard it’s lame.

I heard it’s awesome!

I’m going to start using it.

I hate it.

I love it.

With TikTok, you had some people with thousands of “followers” who figured out how to reach millions of people, and others who used it passively, even quietly, scrolling on their lunch break without a peep.

Likewise, today, you have AI superstars who want to share how much it supercharges their life, their job, their soul.

Others are slowly opting to ask ChatGPT or Gemini what they can make with everything in their fridge, or for help on their History class essay.

And of course, the portion of the population that hates even the mention of it—bemoaning the loss of thinking and empathy that AI will necessarily give rise to.

Rarely do we see such a massive uptake of technology across generations, communities, and ways of life. And of course, along with the uptake, we see the skepticism, fear, and in extreme cases loathing of AI, for what sometimes feels like no other reason than because it is new and different.

I’m very biased, as a technologist who builds AI products, but I am also a cautiously optimistic one, one who takes the inherent moral questions of what and how we build very seriously.

I was slow to TikTok because of my skepticism, and then I was ultimately and fundamentally changed by it: by the creativity, the humor, the education, and the deep connections that I wholeheartedly believe are possible on the platform. In the same way, I see an opportunity for our world to be fundamentally changed by the rise of AI, if we can learn lessons from earlier technological shifts and approach this one with humility, safety, and wellbeing at the forefront of the charge.

So I can empathize with the AI-skepticism, but I take it, and raise you a slice of hope.

It’s not just a platform

I want us to make better use of AI than we did TikTok.

For it to have a better “rap” than TikTok did when it first came out (see: it’s just a bunch of kids dancing) and than it does today (see: it’s just a social media platform)—I believe TikTok can be so much more, but that is for another day.

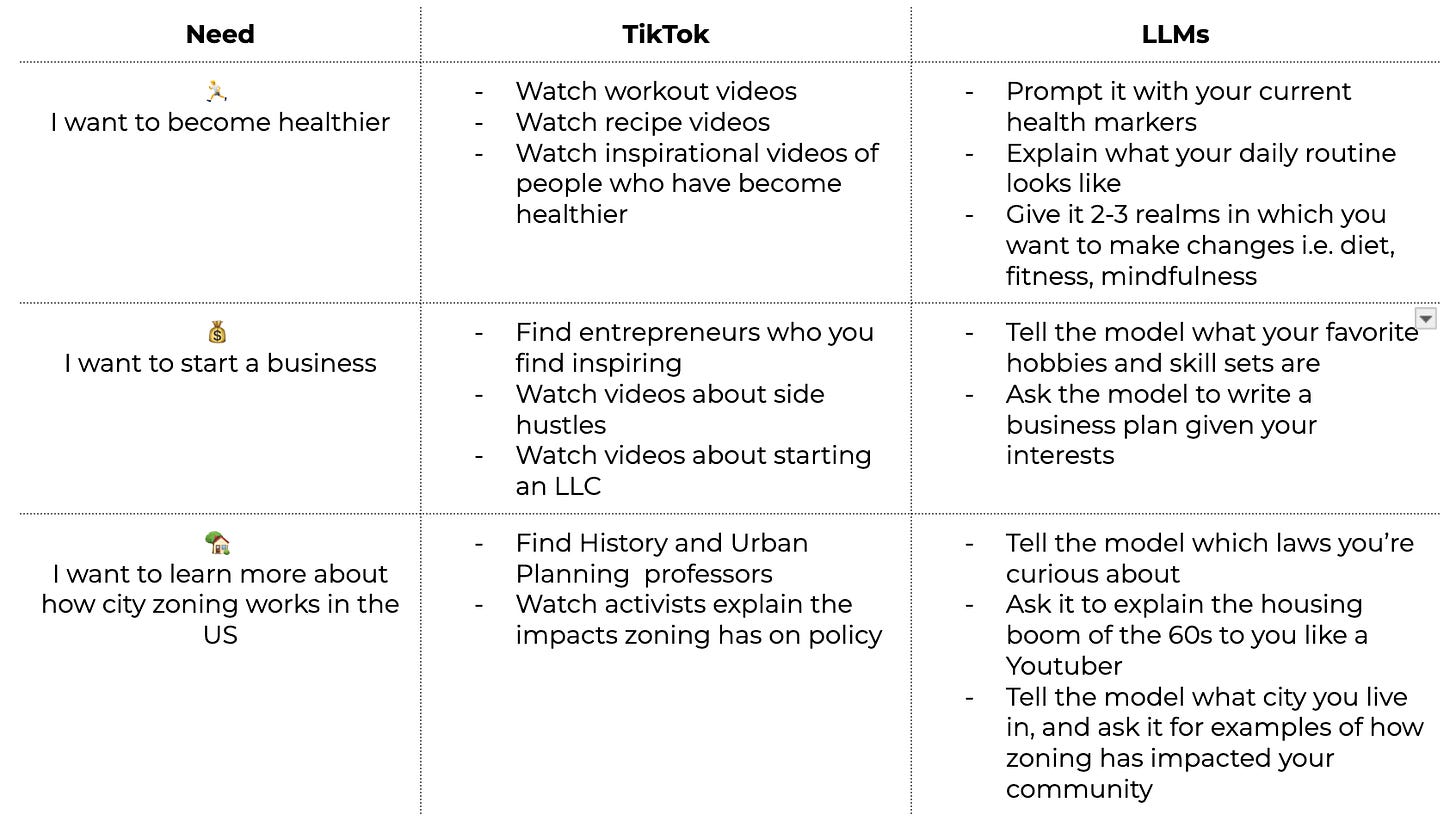

AI, and specifically Large Language Models, have the power to help us think, learn, and accomplish, much like TikTok does, but they can do so in a more personal and tailored way than we’ve ever had access to.

This last case, Learning, is the one that I’m most fascinated by, the one where I see the most potential for an overall net positive impact on us. Not only are models good at summarizing, fact finding, and explaining, but they can also be leveraged as a thought partner, interlocutor, or even trusted teacher.

Of course, there are important realms that LLMs cannot touch: the aspects of learning that are inherently social or communal, the aspects that Tocqueville states can be achieved “by no other means than by the reciprocal influence of men [and women] upon each other.”

Hiring a personal trainer, employing the wisdom of a seasoned mentor, and attending your local town hall are all resources that are still available to us and should still be in your arsenal.

The human to human realm is still important. Period.

But imagine a world where we recruit the human elements and support them with the power of large-scale synthesis.

Where you can better sustain the workout regimen your trainer prescribed because you understand more about your unique physiological makeup.

You share your business plan with your mentor weeks earlier because you weren’t starting from a blank slate.

And you bring probing questions to your local town hall because you deeply understand the policy proposals after an LLM explained them in terms you could grok.

There isn’t a handbook for how to wade through the messiness of where AI is or isn’t appropriate. We’re going to have to experiment and learn and push boundaries, go too far, and ultimately find our way to what is right.

The bad stuff

With that said, there are no delusions here. We have to acknowledge the potential pitfalls and dangers that naturally arise from the use of new technology.

As the physical distance between us shortens through near instant modes of communication, and the speed at which we do more, consume more, and produce more increases, I think we run the risk of losing the textures of life found in slowness, resistance, and challenge.

Could the ease of using chatbots, or the convenience of employing an agent, lead to laziness or complacency?

It could.

Do we stand to cheapen the value of intellectual perseverance that wrestling with a long primary source text teaches us?

Possibly.

But it is not a foregone conclusion that we lose these values entirely; more likely, the way they manifest will evolve.

I think that the way we evaluate skills like reading comprehension and research will need an update. Rote memorization and recall aren’t going to cut it where LLMs are involved, so how can we encourage and measure applied knowledge?

Media and digital literacy become vital. Understanding how to navigate, discern, and consume information online requires:

healthy skepticism

openness to fact checking

a demand for the highest standards of accuracy and grounding.

Hallucinations are real, and our awareness of their potential is imperative to responsible digital engagement.

Raw human creativity is far from “on its way out” in a world where we still enjoy the tactile experience of going to museums or concerts or the theater.

Moreover, when creativity employs both our human faculties and technology, we get entirely new modes of entertainment like fictional AI character conversations and co-creating personalized stories with and for your children.

A Provocation

As with most aspects of life, our approach to AI doesn’t have to be all or nothing. We can be excited by the good stuff, and wary of the bad stuff. Aristotle considered the mean between extremes the most virtuous place to be and the place from which we derive maximum flourishing.

“…but to feel them at the right times, with reference to the right objects, towards the right people, with the right motive, and in the right way, is what is both intermediate and best, and this is characteristic of virtue.” Aristotle, Nicomachean Ethics, Book II, Chapter 6

Directed towards the right goals, with the right guidance, and applied at the right times, AI can be transformative.

It isn’t lost on me that getting all of those right is exactly where the challenge lies. Our capacity for exploration is deep, and the internet deeper still. So will some people stumble into extreme usage of these tools, resulting in extreme applications?

Abso-freaking-lutely.

But the capacity for extremes shouldn’t scare us away from achieving the powerful golden mean. It should embolden us to find that middle ground, and when we do, demand that the people and organizations creating these tools circumscribe them with the right constraints so that more of us can operate freely within that moderate space.

I am a firm believer that the appropriate use of Large Language Models can deepen your experience of the world: not only by making it more efficient to find and synthesize information, but by letting you explore your own thoughts in ways that have never been possible.

Consider one of the many questions you likely ask yourself throughout the day:

I wish I knew more about…

I wonder why…

How does … apply to me?

Try using an LLM to brainstorm, to ask questions, and to learn. Once you’re comfortable conversing with it, try uploading the last few articles or podcasts that had you in a chokehold, maybe even a few pieces of your writing, and ask it to understand your interests and curiosities. With that understanding, it can help you find areas of deeper questioning and exploration that align with the ideas that have been swirling around your brilliantly unique head.

You might find a new research question, essay prompt, or dinner conversation lying dormant among this web of ideas, waiting to be tapped and shared with your world.

With great power, comes great responsibility

Building for learners, specifically students, is a privilege and a responsibility I'm excited to undertake. How we blend pedagogical principles with rapidly evolving technology to serve the next generation of global citizens is a task I do not take lightly.

How we learn to learn, to acquire knowledge, to make sense of the world, is a critical and deeply human endeavor. But in the same way that we might have asked for faster horses instead of dreaming up the automobile, I think that we don’t yet grasp the leaps and bounds that our capacities will make as we barrel into the AI frontier. But we’re starting to!

To be honest, sometimes I envy students today.

They have the possibility of making more vivid connections to their school material and of engaging with the texts and characters of our shared history in new ways.

Teachers can make curriculum even more tailored and accessible, and administrations can meet students exactly where they are and offer more nuanced mechanisms for retention and mastery.

The world is their oyster.

Imagine uploading the syllabi for all of your classes, and asking a model to help make connections across all of the material you stand to learn that year.

Identifying the places where your readings may overlap or be related. Generating a timeline that shows how the theorems you’re learning in Algebra and Chemistry were discovered in the same era in which the people you’re reading about in History class lived.

Highlighting the intersections where you might find interesting nuggets of personal interest or inspiration.

And then sending you a custom podcast that helps you study and share those nuggets with your friends and classmates.

It sounds like sci-fi, but I don’t think it is anymore.

This world of deep learning and reflection can be anyone’s oyster. With a bit of caution and a lot of curiosity, I think we’re just scratching the surface.
